Introduction

This is the second post in our series on real-time data visualization. Last week, we discussed how we progressed from relational databases to big data and real-time analytics. This week, we’ll take a deep dive into how a real-time business intelligence system works. If you’ve used a real-time dashboard before or plan to build one in the future, this post can serve as a primer to help you understand what happens behind the scenes and how real-time data reaches your dashboard.

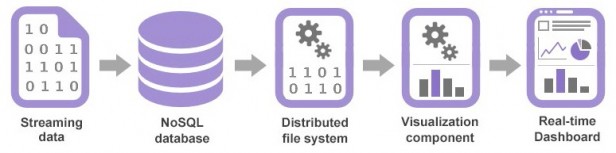

In this post, we’ll show you the four broad steps to how data is visualized in real-time using data charts, why it is important, and how it is processed. Here’s an illustration of each of these steps, which we’ll discuss further below:

What is Real-Time Analytics?

Real-time analytics involves preparing and measuring data as soon as it enters the database. This means that users can gain insights or draw conclusions immediately or very shortly after the data enters their system.

Businesses can quickly identify patterns, trends, and anomalies by monitoring data streams in real time. This enables them to respond promptly to changing conditions or take advantage of new opportunities. With the right real-time analytics platform, businesses can fulfill various needs, such as improving workflows, enhancing the relationship between marketing and sales, finalizing financial close procedures, understanding customer behavior, and more.

Benefits of Real-Time Data

Real-time data analytics helps your business thrive by boosting productivity. It cuts risks, lowers costs, and gives deeper insights into employees, customers, and your financial health.

Here are several significant benefits:

- Real-time analytics enables informed decision-making with instant data access.

- Real-time analytics helps in resolving operational issues swiftly.

- Snappy apps powered by real-time analytics improve user adoption through quick, seamless interactions and no long load times.

- Real-time analytics is essential for time-sensitive tasks such as security, delivery, and advertising optimization.

- Automated intelligence applications reduce the cognitive load of decision-making, making teams more efficient and freeing them to focus on strategic initiatives.

How Do Real-Time Analytics Platforms Work?

To get started with a real-time analytics platform, simplify the process: break your system down into a few simple steps so you can make quick, impactful decisions.

1. Streaming data is captured

Live streaming data is captured using scrapers, collectors, agents, and listeners, and is stored in a database. This is usually a NoSQL database like Cassandra or MongoDB, or sometimes a Hadoop-based warehouse like Hive. Traditional relational databases are often poorly suited to this sort of high-performance analytics, and the rise of NoSQL databases has been key to enabling real-time analytics today.

2. The data is stream processed

The streaming data is processed in many ways, such as splitting, merging, performing calculations, and joining it with outside data sources. This is done by a fault-tolerant, distributed stream-processing system like Storm. Hadoop, the most common big data processing framework, is not ideal for real-time analytics because of its dependence on MapReduce’s batch-oriented processing. However, Hadoop 2.0 allows processing engines other than MapReduce to run on the cluster, which opens up the possibility of using Hadoop in real-time systems going forward. After processing, the data is ready to be read by the visualization component.

3. The processed data is read by the visualization component

The processed data is stored in a structured format, like JSON or XML, in the NoSQL database. From there, it’s read by the visualization component. In most cases, this is a charting library embedded in an internal BI system, or part of a broader visualization platform like Tableau. How often the processed data is refreshed in the JSON or XML file is called the update interval.

4. The visualization component updates the real-time dashboard

The visualization component then reads the data from the structured data file (JSON/XML) and draws a chart, gauge, or other visualization in the reporting interface. How often the processed data is redrawn on the client side is called the refresh interval. In some applications, like stock trading applications, pre-set rules are also triggered based on the streaming data, in addition to rendering a chart.

While this may all sound complex, what’s amazing is that the entire process takes place in seconds, or even milliseconds. This is possible because of advances in database technology, particularly NoSQL databases. It’s further helped by capable stream-processing tools like Storm, which are built specifically for real-time processing. Additionally, visualization tools have matured to support these demanding scenarios, bringing together a whole ecosystem that enables real-time analytics in today’s big data applications.
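To make the four steps concrete, here is a minimal Python sketch of the flow from capture to dashboard. It is an illustration under simplifying assumptions, not how any particular platform implements it: the function names (`capture`, `process`, `publish`, `render`), the event fields, and the moving-average calculation are all hypothetical stand-ins, and plain in-memory lists replace the database and the charting library.

```python
import json
from collections import deque

def capture(raw_events):
    """Step 1: a stand-in collector that yields events one at a time."""
    for event in raw_events:
        yield event

def process(events, window_size=3):
    """Step 2: stream processing, here a moving average over recent values."""
    window = deque(maxlen=window_size)
    for event in events:
        window.append(event["value"])
        average = sum(window) / len(window)
        yield {"label": event["ts"], "value": round(average, 2)}

def publish(points):
    """Step 3: store the processed points as JSON for the visualization layer."""
    return json.dumps({"data": list(points)})

def render(payload):
    """Step 4: a stand-in for the charting library; it only formats the points."""
    return [f'{p["label"]}: {p["value"]}' for p in json.loads(payload)["data"]]

# Hypothetical sample events; a real system would receive these continuously.
raw = [
    {"ts": "10:00:01", "value": 10},
    {"ts": "10:00:02", "value": 20},
    {"ts": "10:00:03", "value": 30},
    {"ts": "10:00:04", "value": 40},
]

payload = publish(process(capture(raw)))
for line in render(payload):
    print(line)
```

In a live system, `publish` would run once per update interval and `render` once per refresh interval, rather than once over a fixed list as they do here.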

What are the Latency Measures for Real-Time Analytics?

Real-time analytics latency consists of two parts:

- Data Latency

- Query Latency

Data Latency

Data latency is the time it takes for the data to become ready in your database or data warehouse after an event occurs. It’s usually measured in seconds or milliseconds. Ideally, you measure latency from when an event occurs to when the data describing that event is available for querying or processing.

Not all scenarios need low-latency data. For example, higher latency is acceptable for use cases like generating quarterly sales reports. But more demanding use cases like fraud detection or recommendation engines rely on real-time or near real-time data, making low latency a priority for teams working on these products.
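As a rough illustration of that measurement, the sketch below computes data latency as the gap between an event’s timestamp and the moment its record became queryable. The timestamps are made-up sample values and the helper name `data_latency_ms` is hypothetical; in practice both times would come from your own event and ingestion logs.

```python
from datetime import datetime, timedelta

def data_latency_ms(event_time, available_time):
    """Milliseconds between an event occurring and its record becoming queryable."""
    return (available_time - event_time) / timedelta(milliseconds=1)

# Hypothetical (event time, time available for querying) pairs.
base = datetime(2024, 1, 1, 12, 0, 0)
records = [
    (base, base + timedelta(milliseconds=120)),
    (base + timedelta(seconds=1), base + timedelta(seconds=1, milliseconds=450)),
    (base + timedelta(seconds=2), base + timedelta(seconds=2, milliseconds=90)),
]

latencies = [data_latency_ms(event, available) for event, available in records]
average = sum(latencies) / len(latencies)
worst = max(latencies)
print(f"average: {average:.0f} ms, worst: {worst:.0f} ms")
```

Tracking the worst case alongside the average is a deliberate choice here: a fraud-detection pipeline, for example, is only as timely as its slowest records.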

Query Latency

Query latency refers to the time taken to execute a query and receive a response. Applications aim to minimize query latency to provide quick, responsive user experiences. For example, one company boosted its application adoption by 350% by doubling the speed of its queries.

Teams are now setting standards for sub-second query latency for their data applications. However, achieving consistently low query latency often requires extensive work, such as optimizing indexes and refining data, which can be time-consuming. This challenge can make it difficult for teams to quickly enhance and expand their analytical features.
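Here is a small sketch of how query latency can be measured, and how an index can change it. It uses Python’s built-in `sqlite3` module with an in-memory database purely for convenience; the `events` table, its columns, and the `timed_query` helper are assumptions made for this example, and the actual timings will vary from machine to machine.

```python
import sqlite3
import time

def timed_query(conn, sql, params=()):
    """Run a query and return (rows, latency in milliseconds)."""
    start = time.perf_counter()
    rows = conn.execute(sql, params).fetchall()
    return rows, (time.perf_counter() - start) * 1000

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE events (user_id INTEGER, amount REAL)")
conn.executemany(
    "INSERT INTO events VALUES (?, ?)",
    [(i % 1000, float(i)) for i in range(50_000)],
)

sql = "SELECT COUNT(*), SUM(amount) FROM events WHERE user_id = ?"

# First run: no index, so SQLite has to scan the whole table.
rows_before, ms_before = timed_query(conn, sql, (42,))

# Add an index on the filtered column, then run the same query again.
conn.execute("CREATE INDEX idx_events_user ON events (user_id)")
rows_after, ms_after = timed_query(conn, sql, (42,))

print(f"without index: {ms_before:.3f} ms, with index: {ms_after:.3f} ms")
```

Both runs return the same rows; only the time taken differs. On a table this small the gap may be modest, but the same measurement approach applies to larger datasets.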

Challenges with Real-Time Analytics

Real-time analytics faces several challenges. Some of them are described below:

Challenge #1: Breaking Free from the “Traditional Reports and Job” Mindset

One hurdle in real-time reporting is overcoming the mindset of scheduled reports. Instead of spending hours creating reports, real-time dashboards or specified parameters can quickly provide needed information. Some may see this shift as challenging, but offering support and training can help employees adapt to new reporting tools. Additionally, real-time CRM analytics can change job descriptions, requiring a focus on sales-oriented tasks like cross-selling and up-selling.

Challenge #2: Concerns of Data Quality

One of the hurdles in implementing real-time BI analytics services relates to data quality. Since real-time reporting aims to facilitate quick decision-making based on current data, the data must be entered accurately. Incorrect data entry can have a cascading effect, spreading inaccurate information throughout the entire company database, not just in one isolated spreadsheet.

According to a survey by Gartner, 75 percent of organizations noted that incorrect data had a negative impact on their finances, with half of them incurring additional costs to rectify the data. Poor data quality impacts various aspects of the business, not just one. Flawed data analysis and interpretation could misalign your entire business strategy. Therefore, having systems in place to ensure the highest possible data quality is critical.
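One common safeguard is to validate each record before it reaches the shared database, so that bad entries are caught at the door instead of spreading. The sketch below shows the idea in Python; the field names (`order_id`, `amount`) and the rules are hypothetical and would need to match your own data.

```python
def validate_event(event):
    """Return a list of problems found in one incoming record (empty if clean)."""
    problems = []
    if not event.get("order_id"):
        problems.append("missing order_id")
    amount = event.get("amount")
    if isinstance(amount, bool) or not isinstance(amount, (int, float)):
        problems.append("amount is not a number")
    elif amount < 0:
        problems.append("amount is negative")
    return problems

# Hypothetical incoming records, two of which contain entry errors.
incoming = [
    {"order_id": "A-100", "amount": 49.90},
    {"order_id": "", "amount": 12.00},
    {"order_id": "A-102", "amount": "twelve"},
]

accepted, rejected = [], []
for event in incoming:
    problems = validate_event(event)
    if problems:
        rejected.append((event, problems))
    else:
        accepted.append(event)

print(f"accepted: {len(accepted)}, rejected: {len(rejected)}")
```

Keeping the rejected records together with the reasons makes it easier to correct them at the source rather than silently dropping them.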

Challenge #3: Data-Rich but Information-Poor

One major drawback of a real-time analytics platform is the challenge of efficiently utilizing the vast amount of available data. Ironically, the abundance of up-to-date information can sometimes overwhelm companies, leaving them unsure of how best to use the data. As Ralph Waldo Emerson noted in his lecture “The American Scholar,” “This time, like all times, is a very good one, if we but know what to do with it,” highlighting the importance of making the most of the current situation.

Many companies lack well-defined data strategies that would allow them to exploit the benefits of real-time analytics fully. A survey of 1,600 businesses revealed that only 4 percent of companies had implemented measures to realize their data’s commercial and operational advantages.

What is Batch vs Real-Time Analytics?

Here are several differences between batch and real-time analytics:

- In batch processing, the processor is only busy when work is assigned to it, allowing for more efficient use of resources. In contrast, real-time processing requires the processor to be highly responsive and active at all times, as it processes events external to the computer system within specific deadlines.

- Real-time processing has lower latency, processing data immediately or with minimal delay, whereas batch processing has higher latency, as data is processed in batches after a delay.

- Batch processing has a lower cost per unit of data, as processing is done in batches, while real-time processing has a higher cost per unit of data, as processing must be done in real-time or near-real-time.

- Real-time processing is perfect for tasks such as fraud detection, sensor data analysis, and real-time monitoring, while batch processing is ideal for tasks like nightly data backups, report generation, and large-scale data analysis.
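The difference is easy to see in a small sketch. Below, the same average is computed two ways: once in batch, after all readings have been collected, and once incrementally, with an up-to-date answer after every reading. The class and function names are hypothetical and the readings are sample values.

```python
class RunningMean:
    """Real-time style: update the aggregate as each event arrives."""

    def __init__(self):
        self.count = 0
        self.total = 0.0

    def update(self, value):
        self.count += 1
        self.total += value
        return self.total / self.count

def batch_mean(values):
    """Batch style: wait for the full set, then compute once."""
    return sum(values) / len(values)

readings = [12.0, 15.0, 9.0, 18.0]

stream = RunningMean()
live_results = [stream.update(value) for value in readings]  # one answer per event
batch_result = batch_mean(readings)                          # one answer at the end

print(live_results, batch_result)
```

Both approaches agree on the final value. The trade-off is that the streaming version does a little work on every event and is always current, while the batch version does all its work at once and is only current after each run.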

Use Cases for Real-Time Analytics

- Real-time data analytics allows for adjustments to campaign parameters based on instant data access, improving targeting and success rates. For instance, adjusting messages and parameters based on real-time clicks and conversion data can directly target relevant audiences.

- Real-time analytics provides traders with a wide perspective on the market, incorporating information from financial databases, news sources, social media, and more. This enables quick buy and sell decisions, taking advantage of market conditions.

- Real-time analytics helps financial teams with back-office procedures and provides strategic insights. Accurate financial statements are crucial for informed decisions, and a real-time analytics platform helps spot errors and reduce operational risk.

- Real-time analysis enables financial institutions to approve or deny loans immediately based on up-to-date credit scores.

- Wearable devices use real-time analytics to track health statistics, such as heart rate, enabling immediate responses and potentially predicting ailments in advance, leading to life-saving interventions.
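Many of these use cases come down to applying a simple rule to each new reading as it arrives. As one illustration, the sketch below flags a sustained high heart rate from a stream of wearable readings. The threshold of 120 bpm and the requirement of three consecutive readings are arbitrary values chosen for the example, not medical guidance, and the function name is hypothetical.

```python
from collections import deque

def heart_rate_alerts(readings, limit=120, sustained=3):
    """Yield (index, bpm) when the last `sustained` readings all exceed `limit`."""
    recent = deque(maxlen=sustained)
    for index, bpm in enumerate(readings):
        recent.append(bpm)
        if len(recent) == sustained and all(value > limit for value in recent):
            yield index, bpm

# Hypothetical stream of heart-rate readings in beats per minute.
readings = [88, 95, 124, 130, 127, 133, 110, 125]
alerts = list(heart_rate_alerts(readings))
print(alerts)
```

Requiring several consecutive readings above the limit, rather than reacting to a single spike, is one simple way to reduce false alarms from noisy sensor data.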

How can FusionCharts be used for Real-Time Data Visualization and Analysis?

FusionCharts is considered a top real-time analytics platform for data visualization and analysis. Its user-friendly interface lets you create your first chart in 15 minutes, eliminating any learning curve. Its consistent API simplifies the creation of complex charts and dashboards, ensuring seamless integration across different chart types. FusionCharts offers consistent themes for a uniform look and feel across all products.

Along with this, FusionCharts also offers multiple installation options, including direct JavaScript, CDN, or npm, and seamless integration with popular JavaScript libraries and back-end programming languages. Want to monitor stocks, analyze website traffic, or track IoT sensor data? FusionCharts delivers unparalleled precision and clarity in real-time data visualization and analysis.

Want to know how FusionCharts helps tackle the challenges mentioned in the previous section? Here are a few points that shed some light on it:

- FusionCharts enables breaking free from traditional reporting mindsets by offering dynamic dashboards and real-time parameter specifications.

- It addresses data quality concerns through features like data validation and error-checking mechanisms.

- FusionCharts provides intuitive data filtering and drill-down capabilities to navigate vast data sets efficiently.

- It offers customizable data visualization templates and best practices to develop effective data strategies.

- With FusionCharts, organizations can transform data-rich but information-poor environments into data-driven success stories.

Conclusion

In conclusion, real-time analytics plays a vital role in businesses, enabling them to capture, process, and visualize streaming data for immediate insights and decision-making. Despite challenges such as data quality and resistance to change, a real-time analytics platform offers significant benefits, including improved productivity and cost reduction.

Moreover, its ability to adapt to changing market conditions provides businesses with a competitive edge. By leveraging real-time data, companies can make informed decisions, optimize operations, and stay ahead of the competition. This dynamic approach positions them for sustained growth and success in today’s fast-paced business landscape.

FAQs

👉 How does real-time analytics operate?

Real-time analytics involves collecting, processing, and analyzing data as it’s generated, using specialized software to handle large volumes quickly. It enables businesses to make informed decisions based on up-to-date information, responding swiftly to changing conditions or events.

👉 What is the biggest challenge in data analytics?

The main challenge in data analytics is handling vast amounts of data. This requires sophisticated tools and methods to extract meaningful insights while ensuring data quality and accuracy.

👉 Who benefits from real-time analytics?

Real-time analytics is used across business sectors, from manufacturing to healthcare, public safety, customer service, and marketing. It’s also used in many business processes, from raw materials sourcing to production planning, customer service, and logistics.

👉 What are the benefits of real-time data analytics?

Real-time data analytics helps businesses thrive by boosting productivity, cutting risks, lowering costs, and providing deeper insights into employees, customers, and financial health.

👉 What are analytics use cases?

Analytics use cases include business intelligence, customer behavior analysis, predictive modeling, marketing optimization, fraud detection, supply chain management, healthcare improvements, financial decision-making, and more.

Start Building with FusionCharts

FusionCharts offers a flexible and scalable solution for businesses of all sizes, making it easier than ever to unlock the potential of this amazing real-time analytics platform.

Start your free trial today and experience the power of real-time insights!

P.S. – If you found this interesting, I recommend you get the white paper on which this series is based. It’ll allow you to read through the entire topic at once rather than in parts.